BIG DATA JOB DESCRIPTION

Big Data enables organizations to process and analyze massive volumes of structured and unstructured data, delivering real-time insights, scalable solutions, and data-driven decision-making across industries.

An Overview of Big Data Job Description Responsibilities and Qualifications

1. The Big Data Analyst collects, processes, and analyzes large datasets to generate actionable insights. This role builds reports and dashboards, identifies trends, ensures data quality, and collaborates with cross-functional teams to support data-driven decisions and improve data platforms.

Big Data Analyst Functions:

- Gathering data from various sources, and then cleansing, organizing, processing, and analyzing it to extract valuable insights and information

- Working with various database types to handle large amounts of data, in structured, semi-structured and unstructured form

- Developing reports and dashboards (charts, tables, ...) with data analysis results to help different audiences make better decisions

- Identifying, analyzing, and interpreting trends or patterns in complex data sets

- Understanding sources and lineage of data to control the quality of our data sets

- Collaborating with team members to prioritize business and information requirements

- Improving the company data lake and data marts

- Performing routine analysis tasks to support day-to-day business

- Applying statistical analysis methods for consumer data research and analysis purposes

- Closely collaborating with both the IT teams and customer-facing teams to accomplish company goals and prove value to our customers

Big Data Analyst Knowledge, Experience and Requirements:

- Experience in data analysis

- Strong analytical skills with the ability to collect, organize, analyze, and disseminate significant amounts of information with attention to detail and accuracy

- Experience with some programming languages: Python, Java, C#, ...

- Experience with databases and SQL/NoSQL

- Working proficiency in written and spoken English

- Enthusiasm for teamwork, constant learning, and adapting to new circumstances

- Technical expertise with data models, database design, data mining and segmentation techniques

- Experience with the Python data science stack (pandas, numpy, ...) and/or writing complex SQL queries

- Knowledge of statistics and experience with statistical packages for analyzing datasets

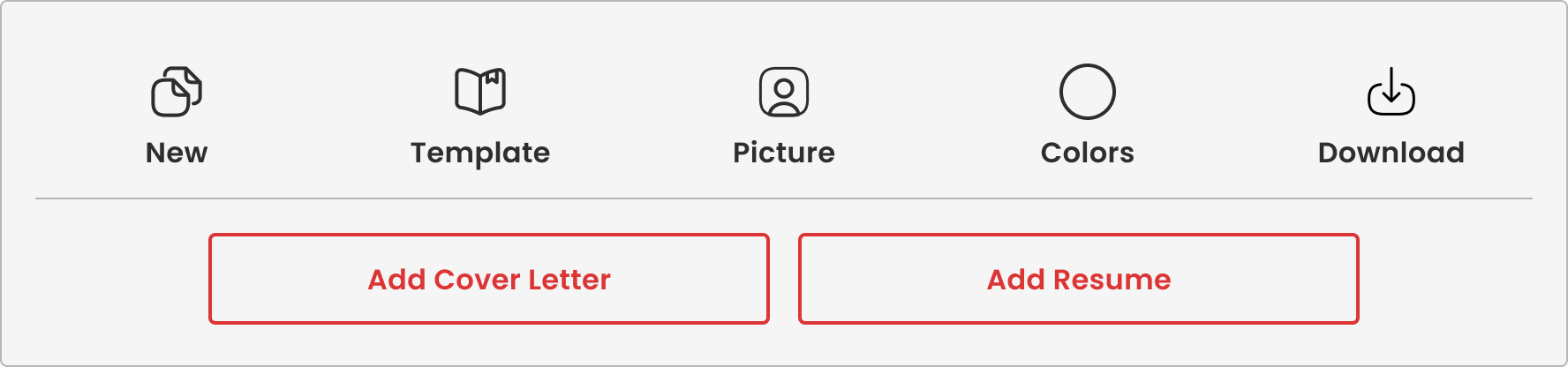


2. The Big Data Architect leads the design and development of a cloud-based data platform that processes real-time data from healthcare systems and connected devices. Working closely with cross-functional teams, this role builds scalable streaming architectures to generate actionable insights and benchmarking capabilities. It requires strong technical leadership, architectural vision, and hands-on expertise in distributed data systems within a fast-paced, innovation-driven environment.

Big Data Architect Responsibilities:

- Define the technology roadmap in support of the product development roadmap

- Lead the design, architecture, and development of multiple real-time streaming data pipelines spanning multiple product lines and edge devices

- Ensure proper data governance policies are followed by implementing or validating data lineage, quality checks, classification, etc.

- Provide technical leadership to agile teams onshore and offshore: Mentor junior engineers and new team members, and apply technical expertise to challenging programming and design problems

- Resolve defects/bugs during QA testing, pre-production, production, and post-release patches

- Have a quality mindset, squash bugs with a passion, and work hard to prevent them in the first place through unit testing, test-driven development, version control, and continuous integration and deployment

- Lead change, be bold, innovate, and challenge the status quo

- Conduct design and code reviews

- Analyze and improve efficiency, scalability, and stability of various system resources

- Operate within an Agile development environment and apply its methodologies

- Track technical debt and ensure unintentional technical debt is not created

- Recommend improvements to the software delivery cycle to help remove waste and impediments for the team

- Drive, promote, and measure team performance against sprint and project goals

- Work with the team to continuously improve development practices and processes

- Troubleshoot complex problems with existing or newly developed software

- Mentor and coach software engineers

Big Data Architect Qualifications:

- Expert knowledge of data architectures, data pipelines, real-time processing, streaming, networking, and security

- Proficient understanding of distributed computing principles

- Advanced knowledge of Big Data querying tools, such as Pig or Hive

- Expert understanding of Lambda Architecture, along with its advantages and drawbacks

- Proficiency with MapReduce and HDFS

- Experience with integration of data from multiple data sources and multiple data types

3. The Big Data Consultant partners with business and technical stakeholders to design and deliver scalable data solutions that meet analytics and operational needs. This role translates business requirements into end-to-end technical implementations, develops data integration and migration strategies, and ensures high-quality design documentation. Leveraging modern big data and cloud technologies, the consultant drives solution architecture, guides development teams, and delivers reliable, cost-effective data platforms in complex environments.

Big Data Consultant Details:

- Work with business and functional stakeholders to understand data requirements and downstream analytics needs.

- Ratify technology solutions, produce concise design documents (including source-to-target mappings and data manipulation/processing logic), and contribute to work estimates

- Create design documentation and an approach for successful program delivery.

- Understand different project methodologies, project lifecycles, major phases, dependencies and milestones within a project, and the required documentation needs.

- Translate business requirements and end-to-end (E2E) designs into technical implementations based on system capabilities.

- Implement data integration and data warehouse solutions using open-source big data tools and cloud-native technologies

- Create solutions for migrating data and processes from source to destination (on-premise to cloud, cloud to cloud, or to a data platform) without business impact.

- Ensure consistency in approach, design, and output to deliver quality and concise design solution documentation.

- Define and promote reusable, extensible, scalable, and maintainable solutions, weighing the trade-off between cost and benefit

- Communicate at all levels in a clear and credible way about the importance of solution design.

- Lead developers in the team and foster a shared vision for design.

Big Data Consultant Requirements:

- Prior experience successfully leading the development and delivery of data applications

- Exposure to software development solutions and design specification/documentation

- Experience in Python, SQL, PySpark, MapReduce, Hive, HBase, and Airflow

- Good understanding of big data workloads on one or more cloud platforms such as AWS, GCP, or Azure

- Experience delivering data processing components within larger end-to-end projects

- Stakeholder management experience, including with more senior staff

- Understanding of data transformation, MI/BI reporting, data warehouse concepts, and relational data models

- Ability to think logically and articulate process designs

- Good attention to detail and a hands-on approach, with excellent organizational skills

- Ability to collaborate across teams to deliver complex systems and components and to manage stakeholders’ expectations well

- Experienced with planning, estimating, organizing, and working on multiple projects


4. The Big Data Developer designs, builds, and optimizes scalable batch and real-time data pipelines using modern big data technologies. This role develops high-performance streaming and ETL solutions, translates business requirements into technical implementations, and ensures data quality through testing and deployment support. Working in an Agile environment, the developer collaborates with cross-functional teams to deliver efficient, reliable data systems and continuously improve performance and scalability.

Big Data Developer Key Responsibilities:

- Serve as a hands-on senior Big Data developer (Spark Structured Streaming, Spark SQL, Kafka)

- Actively participate in scrum calls, story pointing, and estimation, and own the development work.

- Provide technical assistance to the team and take part in efficient design

- Analyze user stories, understand the requirements, and develop code according to the design

- Develop test cases and perform unit testing and integration testing

- Support QA testing, UAT, and production deployment

- Develop batch and real-time data load jobs from a broad variety of data sources into Hadoop, and design ETL jobs that read data from Hadoop and pass it to a variety of consumers and destinations.

- Perform analysis of vast data stores and uncover insights.

- Analyze long-running queries and jobs, and tune their performance using query optimization techniques and Spark code optimization.

Big Data Developer Skills, Experience and Qualifications:

- 8 to 10 years of total IT experience including 5+ years of Big Data experience

- Experience in Spark Structured Streaming, Kafka, Spark SQL, and Scala is a must

- Experience with Databricks, Azure Data Factory, and Azure Data Lake

- Experience in building real-time data streaming pipelines from Kafka (or any message broker) using Spark Structured Streaming

- Hands-on functional programming experience (Scala, Python, or Java 8) prior to Big Data projects.

- Experience in designing Big Data projects and designing data models on Hive and HBase for high performance and storage.

- Executed at least one end-to-end Hadoop data lake project (streaming real-time data) and led the development team.

- Proficient in Linux/Unix scripting.

- Experience in Agile methodology is a must.

- Knowledge of standard methodologies, concepts, best practices, and procedures within an HDF Big Data environment

- Self-starter, able to implement solutions independently.

- Good problem-solving techniques and communication

- Hands-on experience with project management software, such as MS Project

- Excellent verbal and written communication

- Excellent organization and time management skills

5. The Big Data Engineer designs and builds scalable, fault-tolerant data platforms and pipelines to process large volumes of structured and unstructured data. This role develops and integrates data solutions using modern big data technologies, ensures system performance and reliability, and supports cross-functional teams with accessible, high-quality data. Working with diverse data sources and tools, the engineer continuously optimizes infrastructure and stays current with evolving data technologies.

Big Data Engineer Duties:

- Use big data technologies to develop distributed, fault-tolerant, scalable data solutions.

- Collect and process data at scale from a variety of sources for different project needs.

- Participate in identifying, evaluating, selecting and integrating big data frameworks and tools required for the big data platform.

- Design, develop, and maintain data pipelines and data platforms using selected frameworks and tools based on requirements from different projects.

- Convert structured and unstructured data into a form that is suitable for processing. Provide support to different teams in analyzing data.

- Design, develop, and maintain data APIs.

- Integrate data from a variety of data sources using federation techniques.

- Develop solutions independently based on high-level design and architecture with minimal supervision.

- Monitor the performance of the big data platform on a regular basis and tune the infrastructure and platform components accordingly to ensure the best performance.

- Maintain a high level of expertise in data technologies and stay current on the latest developments.

Big Data Engineer Experience and Requirements:

- Bachelor’s Degree or higher in Computer Science or a related field.

- 7+ years of overall experience in software development.

- 3+ years of experience in data engineering.

- Prior experience implementing big data platform components that are scalable, high-performing, and low in operating cost.

- Proven experience with integration of data from multiple heterogeneous and distributed data sources.

- Experience with processing large amounts of data (structured and unstructured).

- Experience in production support and troubleshooting.

- Hands-on knowledge of containers and of API design and implementation is a must.

- Experience with NoSQL databases, graph databases, relational databases, and time series databases.

- Excellent knowledge of various ETL techniques and frameworks, messaging systems, stream-processing systems, big data ML toolkits, and big data querying tools

- Experience in Python, Go, Perl, JavaScript, Kafka, Spark, and Kubernetes.

- Good knowledge of Agile software development methodology.

- Excellent interpersonal and communication (verbal and written) skills.

- Proven experience in managing and working with teams based in multiple geographies.

6. The Big Data Software Engineer designs and develops large-scale data-driven applications and analytics solutions using modern big data, machine learning, and streaming technologies. This role builds robust, scalable data infrastructure, collaborates with analysts to generate actionable insights, and leads projects from concept through production. It requires strong software engineering fundamentals, a passion for innovation, and the ability to deliver high-performance systems in a fast-paced environment.

Big Data Software Engineer Functions:

- Design and build massive Big Data analytical solutions using graph, machine learning, and text mining algorithms.

- Design and build data infrastructure and tools, leveraging Big Data industry standards and cutting-edge frameworks

- Work side by side with analysts to extract meaningful and actionable insights from PayPal data.

- Lead analytical projects from inception through research, development and all the way to production on PayPal’s data processing infrastructure

- Passionate about technology and about developing robust, scalable, state-of-the-art software systems

- Highly motivated and goal-driven, with a can-do approach

- Innovative, entrepreneurial team player who is great at multitasking, curious, and open-minded

Big Data Software Engineer Requirements and Qualifications:

- B.Sc. in computer science, mathematics, or equivalent; or experience in an IDF technological unit

- Proven development experience in Java or Scala

- 4+ years' experience building production software systems

- Hands-on experience with Linux or other *nix systems

- Hands-on experience with different database solutions (SQL/NoSQL)

- Excellent English (written and verbal)

- Hands-on experience with Big Data and streaming technologies: Hadoop, Spark, Elasticsearch

- Design and architecture experience, as well as knowledge of and experience with object-oriented design patterns

- Experience working on large-scale application deployments and performance tuning.

- Experience in text mining, machine learning, or graph algorithms


7. The Senior Big Data Software Engineer designs and delivers scalable data applications and pipelines that transform large volumes of structured and unstructured data into actionable insights. This role applies strong software engineering practices to data and machine learning systems, supports model development and deployment, and ensures reliability through CI/CD and automation. Working with modern cloud and big data technologies, the engineer drives end-to-end solutions from prototype to production in a collaborative, Agile environment.

Senior Big Data Software Engineer Duties:

- Define data requirements, gather and mine large volumes of structured and unstructured data, and validate data using various data tools in the Big Data environment

- Design and develop big data applications based on business requirements defined by research teams or business units

- Migrate or refactor big data proof-of-concept engagements into production applications using best practices and CI/CD standardization

- Develop and orchestrate big data pipelines that turn large-scale raw data into meaningful units of analysis for specific research or data science problems.

- Apply software engineering rigor and best practices to machine learning, including version control, CI/CD, and automation

- Facilitate the development and deployment of proof-of-concept machine learning systems

- Support model development, with an emphasis on auditability, versioning, and data security

- Leverage cloud technologies, namely Azure, to develop, deploy, manage, and govern data science (DS) and ML workflows and supporting resources

Senior Big Data Software Engineer Experience and Qualifications:

- Bachelor’s degree in Computer Science, Math, or Scientific Computing preferred.

- At least 5 years of recent experience in data engineering or data-oriented software development using Big Data frameworks and tools

- Fluency in Python, Bash, PySpark, and SQL

- Extensive experience in distributed compute environments, preferably Spark

- Experience with Big Data Cloud Environments such as Snowflake and Databricks

- Comfort with Linux administration

- Strong understanding of software testing, benchmarking, and continuous integration

- Ability to translate business needs to technical requirements

- Experience developing and maintaining ML systems built with open-source tools (MLflow, specifically, is a big plus)

- Experience building custom integrations between cloud-based systems using APIs

- Strong software engineering skills in complex, multi-language systems

- Experience working within Agile Software Development cycles

8. The Big Data Solution Architect defines and leads the architecture of scalable data platforms and solutions that address complex business and analytics needs. This role partners with cross-functional teams to design and deliver data-driven products, guide development efforts, and ensure alignment with technical strategy. Combining hands-on expertise with leadership, the architect drives innovation, oversees solution delivery, and enables high-performing data systems in an Agile environment.

Big Data Solution Architect Roles:

- Manage and lead the design and development of the Global Prospect database

- Build and enhance existing capabilities and provide ongoing support

- Discover and analyze the prioritized features to be implemented on an ongoing basis

- Maintain, support, and continuously enhance prospect matching algorithms based on evolving needs

- Partner with business, analytics and machine learning teams to identify business problems and design big data and/or real-time solutions.

- Manage and execute on the opportunity backlog, analyze prioritized stories, and lead their build

- Define the technical architecture for new and existing solutions, and guide all development activities so they align with it

- Create a culture of innovation and experimentation, and support a full software development lifecycle that incorporates the best technology approaches and delivery methodologies

- Lead a team of developers, engaging with them in day-to-day activities and helping with design and code reviews.

Big Data Solution Architect Experience and Requirements:

- Bachelor’s degree in Information Technology, Computer Science, Mathematics, Engineering, or equivalent

- 3+ years of experience in managing product or engineering teams, delivering business solutions across a variety of platforms and technologies such as Big Data, PySpark, Hive, Scala, and Python

- Experience in Agile Scrum methodology or the Software Delivery Life Cycle.

- Experience in designing scalable solutions and leading the implementation of complex data products

- Hands-on experience with reporting, API design, user interface development, web services application architectures, and microservices application architecture is preferred

- Strong program management, analytical & problem-solving skills

- Ability to think abstractly and deal with ambiguous/under-defined problems


Editorial Process and Content Quality

This content is part of Lamwork's career intelligence platform and is developed using structured analysis of real-world job data, including publicly available job descriptions, skill requirements, and hiring patterns.

Lam Nguyen, Founder & Editorial Lead, defines the research framework behind Lamwork's career intelligence platform, including job role analysis, skills taxonomy, and structured career insights.

All content is reviewed by Thanh Huyen, Managing Editor, who oversees editorial quality, content consistency, and alignment with real-world role expectations and Lamwork's editorial standards.

Content is developed through a structured process that includes data analysis, role and skill mapping, standardized content formatting, editorial review, and periodic updates.

Content is reviewed and updated periodically to reflect changes in skills, role requirements, and labor market trends.
