BIG DATA CAREER GUIDE

Big Data job description guide covering responsibilities, skills, qualifications, resume proof, and business impact across data roles.

Big Data Responsibilities, Skills and Career Overview

1. Big Data Definition

A Big Data role focuses on collecting, storing, processing, and analyzing very large structured and unstructured datasets so organizations can generate actionable insights, support real-time processing, and make better data-driven decisions. People in the role work across business, IT, analytics, engineering, and customer-facing teams to build scalable data platforms, pipelines, reporting systems, and architecture that improve performance, reliability, and business outcomes.

A clear Big Data job description accounts for cross-functional data workflows and defines the responsibilities that keep systems scalable and data-driven decisions reliable.

2. Big Data Roles and Responsibilities

Data analysis, reporting, and insight delivery

Big Data work includes gathering data from multiple sources, cleansing and organizing it, analyzing trends and patterns, preparing reports and dashboards, supporting day-to-day analysis, and turning large datasets into usable insights for business and downstream users.

Data pipelines, platforms, and integration

The role designs, builds, maintains, and optimizes batch and real-time data pipelines, ETL jobs, streaming workflows, APIs, data lakes, data marts, and cloud or Hadoop-based platforms that process structured and unstructured data at scale.
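To make the batch side of this work concrete, here is a minimal extract-transform-load sketch. It is an illustration only: the sample data, the `orders` table, and the function names are assumptions invented for this example, and an in-memory SQLite database stands in for a real data mart. Production pipelines on Spark, Kafka, or a cloud platform follow the same three stages at far larger scale.

```python
import csv
import io
import sqlite3

# Hypothetical raw input; real pipelines read from files, APIs, or streams.
RAW_CSV = """order_id,region,amount
1,north,120.50
2,south,
3,north,79.50
4, south ,200.00
"""


def extract(raw_text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transform: trim whitespace, drop rows with no amount, cast types."""
    cleaned = []
    for row in rows:
        amount = (row["amount"] or "").strip()
        if not amount:
            continue  # skip incomplete records
        cleaned.append(
            (int(row["order_id"]), row["region"].strip(), float(amount))
        )
    return cleaned


def load(records, conn):
    """Load: write cleaned records into a small table (stand-in for a data mart)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, region TEXT, amount REAL)"
    )
    # INSERT OR REPLACE keeps the load idempotent when a batch is re-run.
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


def run_pipeline(raw_text, conn):
    """Run all three stages and return total amount per region."""
    load(transform(extract(raw_text)), conn)
    return conn.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
    ).fetchall()


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    print(run_pipeline(RAW_CSV, connection))
```

Keeping each stage as its own function makes it easier to test, monitor, and replace one stage without touching the others, which is the same reason larger pipelines are split into separate jobs.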

Architecture, governance, and performance

Big Data responsibilities include defining data architecture, technology roadmaps, data governance practices, lineage, quality checks, classification, disaster recovery, platform monitoring, scalability improvement, and performance tuning across pipelines, clusters, and system resources.
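As one illustration of the quality checks mentioned here, the sketch below tests a batch of records for completeness, key uniqueness, and value ranges. The function name `check_quality`, its parameters, and the sample records are hypothetical and chosen only for this example. Real platforms usually run checks like these inside a dedicated data quality or governance tool.

```python
def check_quality(rows, required, key, ranges=None):
    """Return a list of (row_index, field, problem) tuples for a batch of records.

    rows     -- list of dicts, one per record
    required -- field names that must be present and non-empty
    key      -- field name that must be unique across the batch
    ranges   -- optional {field: (low, high)} bounds for numeric fields
    """
    ranges = ranges or {}
    issues = []
    seen_keys = set()
    for index, row in enumerate(rows):
        # Completeness: every required field needs a value.
        for field in required:
            if row.get(field) in (None, ""):
                issues.append((index, field, "missing"))
        # Uniqueness: the key must not repeat within the batch.
        key_value = row.get(key)
        if key_value in seen_keys:
            issues.append((index, key, "duplicate"))
        seen_keys.add(key_value)
        # Validity: numeric values must fall inside the allowed range.
        for field, (low, high) in ranges.items():
            value = row.get(field)
            if isinstance(value, (int, float)) and not low <= value <= high:
                issues.append((index, field, "out_of_range"))
    return issues


if __name__ == "__main__":
    # Hypothetical sample batch with one problem of each kind.
    sample = [
        {"id": 1, "amount": 10.0},
        {"id": 2, "amount": None},
        {"id": 2, "amount": -5.0},
    ]
    for issue in check_quality(sample, ["id", "amount"], "id", {"amount": (0, 1000)}):
        print(issue)
```

Returning a list of issues instead of raising on the first failure lets a pipeline log every problem in a batch and then decide whether to quarantine the bad rows or stop the run.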

Collaboration, delivery, and technical leadership

Big Data professionals work with business, functional, analytics, machine learning, IT, engineering, and customer-facing teams to translate requirements into technical solutions, guide developers, support Agile delivery, conduct reviews, mentor engineers, and communicate solution design clearly.

3. Essential Skills & Qualifications

Core skills include Scrum expertise, architecture review, DevOps supportability, integration development, ETL design, cluster management, streaming applications, system architecture, CI/CD implementation, Python development, technical leadership, mentoring, conflict resolution, solution oversight, technical support, Agile development, problem solving, code quality, sprint planning, and cross-functional collaboration.

Hard skills include Hadoop, Spark, Hive, Kafka, HDFS, MapReduce, SQL, NoSQL, Java, Scala, Python, R, shell scripting, cloud platforms such as AWS, Azure, and GCP, data warehousing, data modeling, ETL/ELT workflows, dashboards, reporting tools, APIs, CI/CD pipelines, containers, microservices, and performance monitoring.

Soft skills include analytical thinking, communication, presentation, collaboration, flexibility, creativity, time management, stakeholder management, ownership, mentoring, troubleshooting, and the ability to work across teams, priorities, and changing business needs.

Qualifications listed in the analyzed job descriptions include degrees in Computer Science, Data Science, Statistics, Information Systems, Applied Mathematics, Software Engineering, Computational Science, Business Analytics, Artificial Intelligence, Big Data Analytics, Economics, or Mathematics, with required experience ranging from 3 to 10 years depending on the role.

Building a strong technical foundation takes broad Big Data skills and hands-on experience, both of which are needed to handle complex data systems and evolving business needs.

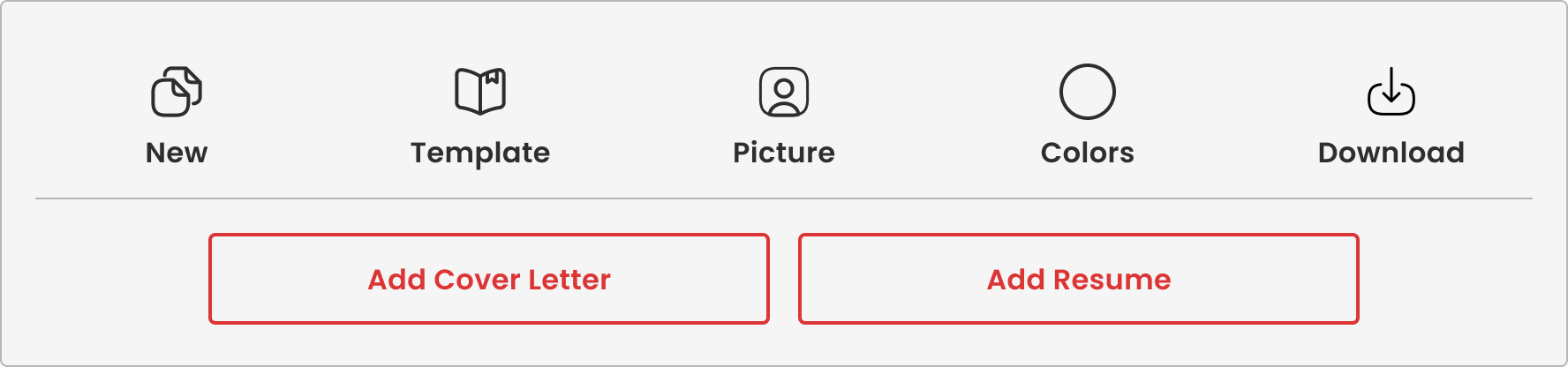

4. Big Data Resume Guide

A strong Big Data resume should show ownership of architecture, data solutions, ETL automation, streaming applications, system support, CI/CD, performance monitoring, data security, Spark jobs, Python services, unit testing, DevOps work, and cross-functional engineering collaboration.

Leadership signals include mentoring teams, reviewing design and code, resolving scrum dependencies, driving Agile and DevOps adoption, acting as a subject matter expert, guiding solution implementation, and supporting teams through testing, deployment, and incident handling.

5. Final Insight

Big Data matters because it connects large-scale data collection, processing, architecture, analytics, and delivery into systems that support real-time insights, predictive analytics, reporting, platform reliability, customer value, and competitive business outcomes.

Editorial Process and Content Quality

This content is part of Lamwork's career intelligence platform and is developed using structured analysis of real-world job data, including publicly available job descriptions, skill requirements, and hiring patterns.

Lam Nguyen, Founder & Editorial Lead, defines the research framework behind Lamwork's career intelligence platform, including job role analysis, skills taxonomy, and structured career insights.

All content is reviewed by Thanh Huyen, Managing Editor, who oversees editorial quality, content consistency, and alignment with real-world role expectations and Lamwork's editorial standards.

Content is developed through a structured process that includes data analysis, role and skill mapping, standardized content formatting, editorial review, and periodic updates.
